Integrating large language models (LLMs) into production environments is becoming increasingly common for enterprises looking to enhance their applications with AI capabilities. In this post, we’ll cover the key deployment patterns, the tools involved, and the challenges of running LLMs effectively in production.

- Introduction to LLMs

- Deployment Patterns

- Overcoming Challenges

- Choosing the Right Tools

- Real-World Scenarios

Introduction to LLMs

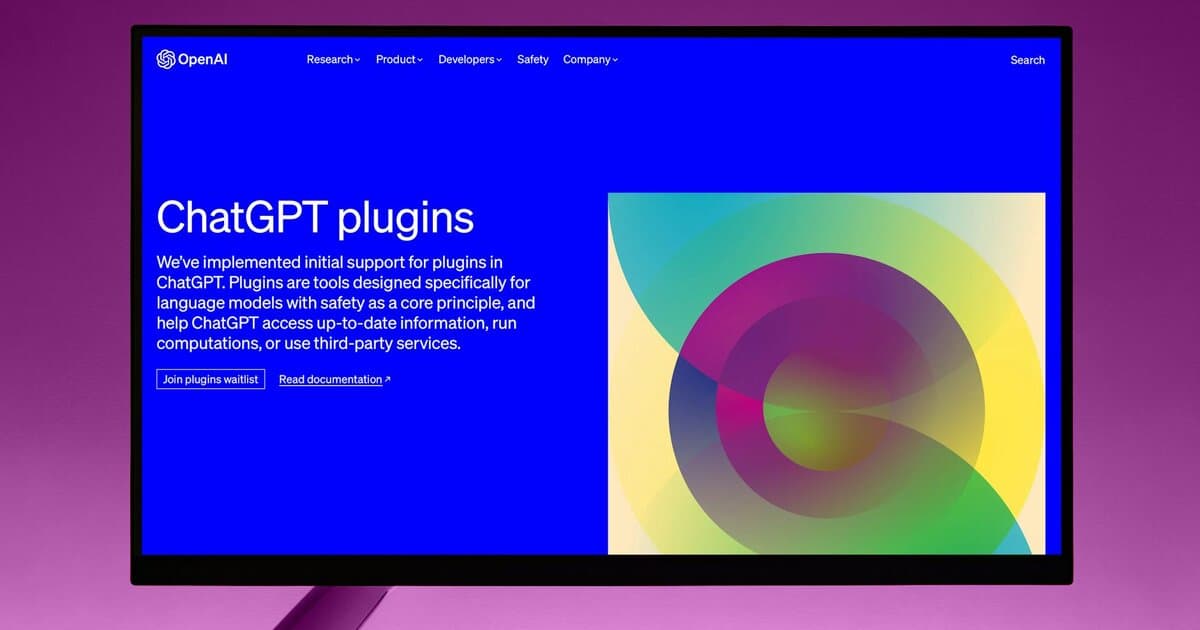

Large language models, such as OpenAI’s GPT family or Google’s BERT, are transforming how applications process and understand natural language. Built on the transformer architecture, these models excel at text generation and summarization (the strength of generative models such as GPT) and at classification tasks like sentiment analysis (a common use of encoder models such as BERT). Integrated into production systems, LLMs can make user experiences more intuitive and automate complex data-processing tasks.

Those capabilities come with real engineering challenges. Before integrating LLMs into enterprise systems, it’s crucial to understand the models’ architecture and limitations. High-performance applications in particular need high data throughput and low latency, and tuning for both requires a solid understanding of the model and of the infrastructure it runs on.

At Champlin Enterprises, our experience shows that the key to successfully integrating LLMs lies in aligning their capabilities with specific business objectives. This requires not just technical understanding but also a strategic view of where AI adds the most value.

Deployment Patterns

There are several patterns for deploying LLMs in production, each with its own trade-offs. One common approach is a microservices architecture, where the LLM runs as a separate service accessed via APIs. This pattern lets you scale and update the LLM independently of the rest of the application. The costs are added latency and network overhead, which efficient API gateways and load balancing can reduce: on Kubernetes, for example, Services distribute requests across model replicas, and a service mesh such as Istio or Linkerd adds finer-grained traffic control.
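To make the microservices pattern concrete, here is a minimal Python sketch of a client that spreads requests across several replicas of an LLM service and fails over when one is unreachable. The endpoint URLs, the `send` transport function, and the retry count are assumptions for illustration. In a Kubernetes deployment a Service or service mesh would usually handle this balancing for you, so treat this as an illustration of the idea rather than a recommended client.

```python
import itertools
from typing import Callable, Optional, Sequence


class LLMServiceClient:
    """Round-robin client over LLM service replicas, with simple failover."""

    def __init__(self,
                 endpoints: Sequence[str],
                 send: Callable[[str, str], str],
                 max_attempts: int = 3) -> None:
        if not endpoints:
            raise ValueError("at least one endpoint is required")
        self._endpoints = itertools.cycle(endpoints)
        self._send = send  # hypothetical transport: (endpoint, prompt) -> completion
        self._max_attempts = max_attempts

    def complete(self, prompt: str) -> str:
        last_error: Optional[Exception] = None
        for _ in range(self._max_attempts):
            endpoint = next(self._endpoints)  # rotate to spread load
            try:
                return self._send(endpoint, prompt)
            except ConnectionError as exc:  # replica unreachable: try the next one
                last_error = exc
        raise RuntimeError(f"all {self._max_attempts} attempts failed") from last_error


# Usage with a fake transport in which one (hypothetical) replica is down.
def fake_send(endpoint: str, prompt: str) -> str:
    if endpoint.startswith("http://llm-0"):
        raise ConnectionError(f"{endpoint} unreachable")
    return f"[{endpoint}] reply to: {prompt}"


client = LLMServiceClient(["http://llm-0:8000", "http://llm-1:8000"], fake_send)
print(client.complete("Summarize this ticket"))
```

Injecting `send` keeps the balancing logic testable and lets you swap in a real HTTP call without touching it.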

Another pattern embeds the LLM directly in the application as a library. This removes the network hop of an API call, which lowers latency, but it complicates version management and scaling because the model is tied to the application’s own release and scaling cycle. The approach suits applications where low latency is critical, such as real-time text generation tools.
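The embedded pattern trades the network hop for in-process state. The following is a minimal sketch, assuming a hypothetical `_load_model` loader and a model version pinned in application config. The model is loaded once per process and reused. As a result, every replica of the application carries its own copy, and a model upgrade means redeploying the application itself.

```python
from functools import lru_cache
from typing import Callable

# Hypothetical version pin; in practice this would come from app config.
PINNED_MODEL_VERSION = "sentiment-v3"


def _load_model(version: str) -> Callable[[str], str]:
    """Hypothetical stand-in for an expensive load, e.g. reading weights from disk.

    Returns a trivial keyword rule so the sketch runs without real weights.
    """
    def predict(text: str) -> str:
        return "positive" if "good" in text.lower() else "negative"
    return predict


@lru_cache(maxsize=1)
def get_model(version: str = PINNED_MODEL_VERSION) -> Callable[[str], str]:
    # Cached per process: the first call pays the load cost, later calls reuse it.
    return _load_model(version)


def classify(text: str) -> str:
    # Inference runs in the caller's process; there is no API call to make.
    return get_model()(text)


print(classify("Good service, fast reply"))
```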

For batch processing tasks, such as large-scale sentiment analysis, a queue-based pattern can be effective. Tasks are placed on a queue and processed asynchronously by workers, using message brokers like Apache Kafka or RabbitMQ. This pattern handles large volumes of data while keeping processing resources well utilized, since the number of workers can be scaled to match the backlog.
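The queue-based pattern can be sketched with Python’s standard-library `queue` and `threading` modules standing in for a broker such as Kafka or RabbitMQ. This illustration rests on several assumptions. `classify_batch` is a hypothetical batched model call. A real consumer would use the broker’s client library, acknowledge messages, and handle failures. The worker groups jobs into micro-batches because batching typically improves model throughput on GPUs.

```python
import queue
import threading
from typing import Callable, Dict, List, Optional, Tuple

Job = Tuple[str, str]  # (document_id, text)


def run_batch_worker(jobs: "queue.Queue[Optional[Job]]",
                     results: Dict[str, str],
                     classify_batch: Callable[[List[str]], List[str]],
                     batch_size: int = 8) -> None:
    """Consume jobs in micro-batches until a None sentinel signals shutdown."""
    batch: List[Job] = []
    while True:
        job = jobs.get()
        if job is not None:
            batch.append(job)
        # Flush on shutdown, when the batch is full, or when the queue is idle.
        if batch and (job is None or len(batch) >= batch_size or jobs.empty()):
            labels = classify_batch([text for _, text in batch])
            for (doc_id, _), label in zip(batch, labels):
                results[doc_id] = label
            batch.clear()
        if job is None:
            return


def fake_classify_batch(texts: List[str]) -> List[str]:
    # Hypothetical stand-in for a batched sentiment model.
    return ["positive" if "great" in t else "negative" for t in texts]


jobs: "queue.Queue[Optional[Job]]" = queue.Queue()
results: Dict[str, str] = {}  # maps each document ID to its label
worker = threading.Thread(target=run_batch_worker,
                          args=(jobs, results, fake_classify_batch))
worker.start()
for i, text in enumerate(["great product", "late delivery", "great support"]):
    jobs.put((f"doc-{i}", text))
jobs.put(None)  # sentinel: no more work
worker.join()
```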

Overcoming Challenges

Deploying LLMs in production comes with its own pitfalls. Key challenges include managing resource consumption, ensuring model accuracy, and addressing the ethical concerns AI raises. LLMs are computationally intensive, often requiring specialized hardware like GPUs or TPUs, which drives up costs. Cloud providers’ auto-scaling features can offset some of these costs by adding capacity only when demand requires it.
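For auto-scaling on Kubernetes, the Horizontal Pod Autoscaler’s documented core rule is `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`. Scaling is skipped when that ratio is within a tolerance of 1.0 (0.1 by default). The sketch below reproduces that arithmetic in Python, assuming a per-replica metric such as GPU utilization is exported (the HPA can only act on one through the custom or external metrics API), and using hypothetical replica bounds. The real controller also applies stabilization windows and scaling policies that are not modeled here.

```python
import math


def desired_replicas(current_replicas: int,
                     current_metric: float,
                     target_metric: float,
                     min_replicas: int = 1,   # hypothetical lower bound
                     max_replicas: int = 10,  # hypothetical upper bound
                     tolerance: float = 0.1) -> int:
    """Replica count per the HPA's core formula, clamped to [min, max]."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # near target: avoid churning pods
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


# 4 GPU-backed replicas averaging 90% utilization against a 60% target.
print(desired_replicas(4, current_metric=90, target_metric=60))  # 6
```

The 60% target is illustrative. A target below 100% leaves headroom for traffic spikes while new capacity comes online.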

Ensuring model accuracy in real-world applications involves continuous monitoring and retraining. Data drift, where incoming data diverges from the model’s training data, can degrade performance. Implementing feedback loops and leveraging platforms like TensorFlow Extended (TFX) can help maintain model accuracy over time.
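One lightweight way to quantify data drift is the Population Stability Index (PSI). PSI compares the share of traffic that falls into each bin of some input feature (for example, prompt length) between a reference window and a recent window. This is a swapped-in, commonly used technique rather than TFX’s own drift checks, and the bin proportions below are made-up illustrative numbers. A frequently quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as significant drift, but thresholds should be tuned to your data.

```python
import math
from typing import Sequence


def population_stability_index(expected: Sequence[float],
                               actual: Sequence[float],
                               eps: float = 1e-6) -> float:
    """PSI = sum((a - e) * ln(a / e)) over bins, given per-bin proportions."""
    if len(expected) != len(actual):
        raise ValueError("distributions must have the same number of bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # floor empty bins to avoid log(0)
        psi += (a - e) * math.log(a / e)
    return psi


# Hypothetical prompt-length bins: training-time share vs. last week's share.
baseline = [0.25, 0.25, 0.25, 0.25]
recent = [0.10, 0.20, 0.30, 0.40]
print(round(population_stability_index(baseline, recent), 3))  # 0.228: moderate drift
```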

Ethical considerations are equally important. LLMs can produce biased or inappropriate content if not carefully managed. Bias detection and regular auditing of model outputs are essential practices for maintaining ethical standards in AI applications.
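A very small piece of an auditing workflow can be sketched as follows. Every response is recorded for later review, and responses containing terms from a blocklist are held back. The blocklist and the `OutputAuditor` class are hypothetical. Keyword matching is a crude first line of defense, not bias detection, and a real deployment would typically layer classifier-based moderation and human review on top.

```python
import time
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class OutputAuditor:
    """Logs every model output and flags ones containing blocked terms."""
    blocked_terms: Set[str]  # lowercase terms; a hypothetical policy list
    audit_log: List[Dict[str, object]] = field(default_factory=list)

    def review(self, prompt: str, output: str) -> bool:
        """Return True if the output may be shown to the user."""
        words = {w.strip(".,!?;:\"'").lower() for w in output.split()}
        hits = sorted(words & self.blocked_terms)
        # Log everything, not just hits, so auditors can sample normal traffic too.
        self.audit_log.append({
            "timestamp": time.time(),
            "prompt": prompt,
            "output": output,
            "flagged_terms": hits,
        })
        return not hits


auditor = OutputAuditor(blocked_terms={"placeholder_term"})
print(auditor.review("Summarize the ticket", "The customer wants a refund."))  # True
```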

Choosing the Right Tools

Selecting the right tools for LLM integration can significantly impact the success of your deployment. Popular frameworks like Hugging Face’s Transformers provide robust APIs for working with various LLM architectures and are a good starting point for most implementations. If you’re looking for end-to-end solutions that also cover deployment, tools like Selene AI provide model serving capabilities alongside optimization and monitoring features.

For infrastructure management, Kubernetes is a natural choice for container orchestration, offering a scalable environment for deploying AI models. Additionally, using MLOps platforms such as MLflow or Kubeflow can streamline the deployment, monitoring, and management of models.

Proper observability is crucial. Prometheus collects and stores metrics, and Grafana visualizes them. Together they can provide insight into model performance metrics such as request latency and throughput, helping identify bottlenecks and optimize resource use. Both can slot into a larger observability stack as part of a comprehensive monitoring strategy.
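Latency percentiles are usually the first model metric worth watching, since averages hide slow outliers. Prometheus client libraries provide `Histogram` and `Summary` metric types for this purpose. The standard-library sketch below only illustrates what a p95 measures, using a hypothetical `LatencyTracker` and the nearest-rank percentile method.

```python
import math
import time
from contextlib import contextmanager
from typing import Callable, Iterator, List


class LatencyTracker:
    """Records request durations and reports nearest-rank percentiles."""

    def __init__(self, clock: Callable[[], float] = time.perf_counter) -> None:
        self._clock = clock
        self.samples: List[float] = []

    @contextmanager
    def track(self) -> Iterator[None]:
        start = self._clock()
        try:
            yield
        finally:
            # Record the duration even if the wrapped call raised.
            self.samples.append(self._clock() - start)

    def percentile(self, p: float) -> float:
        """Nearest-rank percentile for p in (0, 100]."""
        if not self.samples:
            raise ValueError("no samples recorded")
        ordered = sorted(self.samples)
        rank = math.ceil(p / 100 * len(ordered))
        return ordered[max(rank, 1) - 1]


tracker = LatencyTracker()
with tracker.track():
    time.sleep(0.01)  # stand-in for a model call
print(f"p95 latency: {tracker.percentile(95):.3f}s")
```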

Real-World Scenarios

Consider a customer support system enhanced with an LLM to analyze and categorize incoming queries. A microservices architecture lets the language model scale independently, serving multiple departments without degrading response times. Caching adds further speed: storing the results of frequently repeated queries in Redis or Memcached lets the system skip the model call entirely on a cache hit.
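The caching idea can be sketched as a cache-aside lookup: normalize the query, check the cache, and call the model only on a miss. The in-memory `TTLCache` below is a stand-in for Redis or Memcached (with redis-py, `set(key, value, ex=ttl)` gives the same expiry behavior). `call_model` is a hypothetical hook for the LLM service. Normalizing case and whitespace raises the hit rate. Anything more aggressive, like semantic matching, is beyond this sketch.

```python
import hashlib
import time
from typing import Callable, Dict, Optional, Tuple


class TTLCache:
    """In-memory stand-in for Redis/Memcached with per-entry expiry."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds
        self._clock = clock
        self._store: Dict[str, Tuple[float, str]] = {}

    def get(self, key: str) -> Optional[str]:
        entry = self._store.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self._clock() >= expires_at:
            del self._store[key]  # lazily evict the expired entry
            return None
        return value

    def set(self, key: str, value: str) -> None:
        self._store[key] = (self._clock() + self._ttl, value)


def cache_key(query: str) -> str:
    # Normalize case and whitespace so trivially different queries share an entry.
    normalized = " ".join(query.lower().split())
    return "llm:category:" + hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def categorize(query: str, cache: TTLCache,
               call_model: Callable[[str], str]) -> str:
    """Cache-aside lookup: return a cached label, or call the model and store it."""
    key = cache_key(query)
    cached = cache.get(key)
    if cached is not None:
        return cached  # hit: no model call
    label = call_model(query)
    cache.set(key, label)
    return label


cache = TTLCache(ttl_seconds=300)
print(categorize("Where is my  REFUND?", cache, call_model=lambda q: "billing"))
```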

In content management systems, integrating LLMs can automate content tagging and summarization, reducing manual effort and enhancing searchability. This is particularly effective in environments with high content turnover, such as news platforms or e-commerce sites.
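For automated tagging, a common safeguard is to constrain the model to a fixed taxonomy and validate its reply rather than trusting free-form output. In this sketch the tag list, prompt wording, and `call_llm` function are all hypothetical. Any client that takes a prompt string and returns the model’s text would fit.

```python
import json
from typing import Callable, List, Set

# Hypothetical taxonomy for a news site.
ALLOWED_TAGS: Set[str] = {"business", "politics", "sports", "technology"}


def build_tagging_prompt(article: str, allowed: Set[str]) -> str:
    return (
        "Choose up to 3 tags for the article below from this list: "
        + ", ".join(sorted(allowed))
        + '. Reply with JSON only, for example {"tags": ["sports"]}.\n\n'
        + article
    )


def tag_article(article: str,
                call_llm: Callable[[str], str],
                allowed: Set[str] = ALLOWED_TAGS) -> List[str]:
    """Ask the model for tags and keep only valid ones from the taxonomy."""
    raw = call_llm(build_tagging_prompt(article, allowed))
    try:
        parsed = json.loads(raw)
    except json.JSONDecodeError:
        return []  # malformed reply: no tags (a real system might queue it for review)
    tags = parsed.get("tags", []) if isinstance(parsed, dict) else []
    if not isinstance(tags, list):
        return []
    # Drop anything outside the taxonomy so free-form output can't pollute it.
    return [t for t in tags if isinstance(t, str) and t in allowed][:3]


def fake_llm(prompt: str) -> str:
    # Hypothetical model reply, including one tag outside the taxonomy.
    return '{"tags": ["sports", "weather", "business"]}'


print(tag_article("The club announced record revenue this season.", fake_llm))
```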

At Champlin Enterprises, we’ve seen success in client engagements where LLMs are used to preprocess data for machine learning pipelines, improving the quality and relevance of input data for more accurate downstream analyses. Each scenario requires a tailored approach, emphasizing the importance of aligning technological capabilities with business goals.

Integrating LLMs into your applications can offer significant enhancements when done correctly. Understanding the deployment patterns, choosing the right tools, and anticipating challenges are critical for successful implementation. If this is an area you’re exploring, it’s worth a conversation to see how our engineering services can support your integration efforts. Let’s talk.