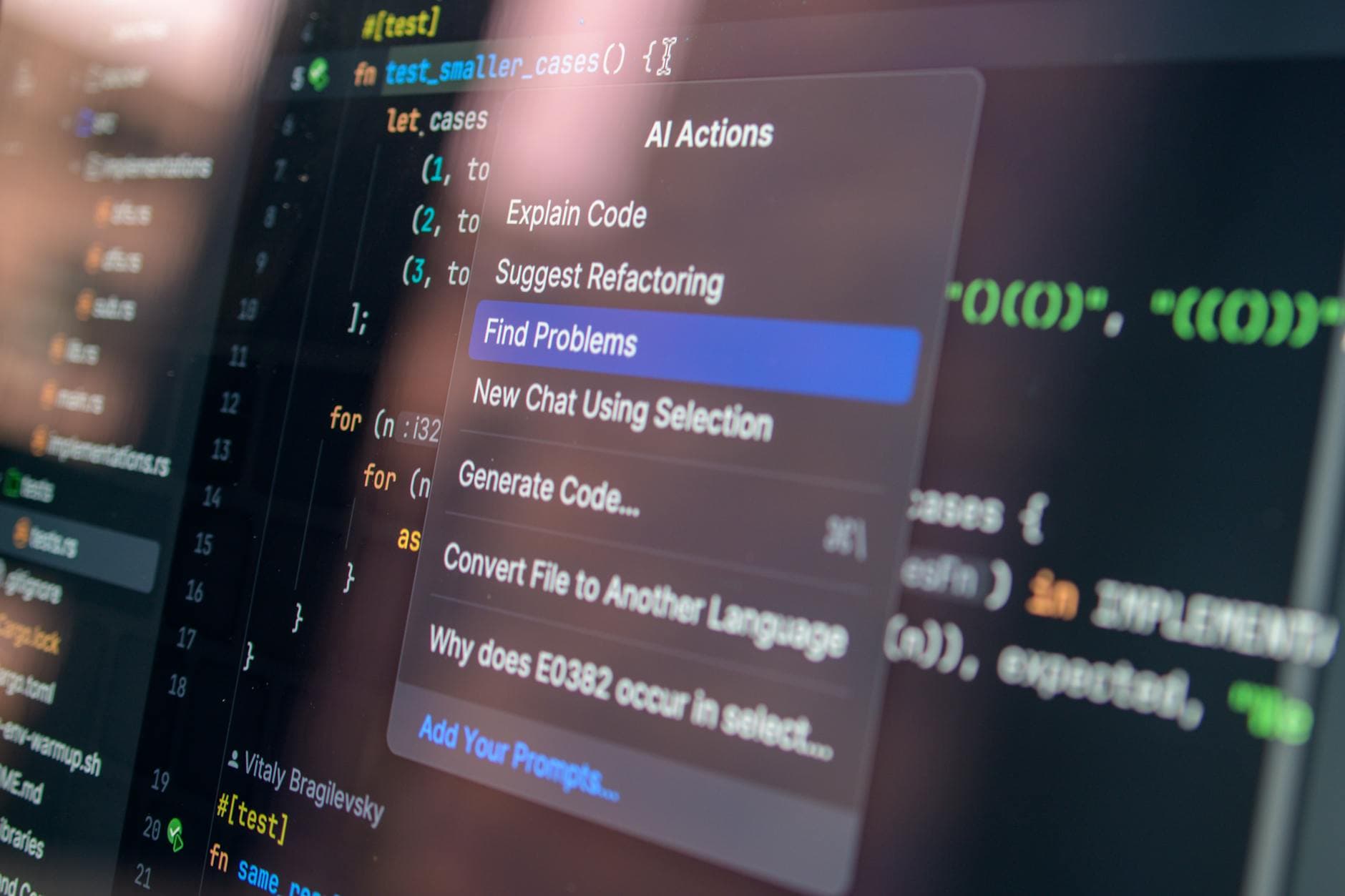

I saw a post in a Claude AI community group this week that stopped me mid-scroll. Someone asked: “Is anyone else having issues with Claude sabotaging your projects at 95% completion?!”

The post had 35 comments, 14 likes, and a wave of people chiming in with opinions ranging from “Claude broke my app” to “you’re just prompting it wrong.” It’s a conversation I’ve seen dozens of times in AI coding communities. And it’s one that deserves a clear, experience-backed answer.

There is no sabotage happening.

Claude — or any AI coding assistant — doesn’t have an agenda. It’s not getting bored at 95% and deciding to torch your work. It doesn’t remember that it spent the last three hours helping you and think, “You know what? Let me undo all of this.” That’s not how these tools work. What is happening is more nuanced, more fixable, and honestly more your responsibility to manage than you might want to hear.

What’s Actually Happening at 95%

Here’s the pattern I see repeatedly: Someone starts a project with an AI coding assistant. The first 70-80% goes smoothly. Features come together, the app looks great, everything seems to be working. Then they hit the integration phase — where all the pieces need to actually connect, share state, handle edge cases, and work as a system — and suddenly the AI starts “breaking things.”

One commenter in the thread nailed it: “You think you’re building an app but you’re not. You’re building a frame and haven’t wired the data correctly. Most projects fail at this point because they’re built on shifting sand.”

That’s not sabotage. That’s the natural consequence of a few specific, addressable problems.

Problem 1: Context Window Limitations

This is the biggest one, and most people don’t realize it’s happening. AI models work within a context window: a fixed number of tokens (roughly, chunks of text) they can “see” at any given time. That window has to hold your prompts, the files you share, and the conversation so far. When your project is small, the AI can hold your entire codebase in context, so it can see every file, every function, every relationship between components.

At 95% completion, your project is larger. Significantly larger. The AI can no longer hold everything in context simultaneously. So when you ask it to make a change, it’s working with incomplete information. It might modify a component without understanding how that component connects to three other files it can no longer see. The result looks like sabotage. It’s actually amnesia.

The fix: Be explicit about context. When you’re deep into a project, don’t just say “fix the login flow.” Provide the relevant files. Explain the data flow. Tell the AI what it can’t see. I maintain project documentation files — CLAUDE.md files — that give the AI a map of the entire architecture so it can make informed decisions even when it can’t read every file.
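To make the context limit concrete, here is a rough TypeScript sketch of choosing which files to hand the AI within a token budget. It is an illustration under stated assumptions, not how Claude manages context: the 4-characters-per-token ratio is a crude rule of thumb rather than a real tokenizer, and `ProjectFile`, `estimateTokens`, and `selectFilesForContext` are hypothetical names.

```typescript
// Hypothetical shape for a file you might paste into a prompt.
interface ProjectFile {
  path: string;
  content: string;
  // Lower number = more relevant to the current task (you assign this).
  priority: number;
}

// Crude assumption: about 4 characters per token. Real tokenizers differ.
const CHARS_PER_TOKEN = 4;

function estimateTokens(text: string): number {
  return Math.ceil(text.length / CHARS_PER_TOKEN);
}

// Greedily keep the most relevant files that still fit the budget.
function selectFilesForContext(
  files: ProjectFile[],
  tokenBudget: number
): { included: string[]; omitted: string[] } {
  const included: string[] = [];
  const omitted: string[] = [];
  let used = 0;

  // Copy before sorting so the caller's array is not reordered.
  const byPriority = files.slice().sort((a, b) => a.priority - b.priority);
  for (const file of byPriority) {
    const cost = estimateTokens(file.content);
    if (used + cost <= tokenBudget) {
      included.push(file.path);
      used += cost;
    } else {
      omitted.push(file.path);
    }
  }
  return { included, omitted };
}
```

The `omitted` list is the useful part: those are the files to describe in words, because the AI will not see them.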

Problem 2: Accumulated Technical Debt From Accepting Everything

Here’s a habit I see constantly: someone accepts every suggestion the AI makes without reviewing it. The code works in isolation, so they move on. But each accepted suggestion might introduce a slightly different pattern, a redundant state variable, or a subtle inconsistency in how data flows through the app.

For the first 70% of the project, these inconsistencies don’t matter. Everything is isolated enough that it works. At 95%, when you’re wiring everything together, those accumulated small decisions create conflicts. The AI isn’t sabotaging your project — it’s tripping over the technical debt you let it create earlier.

The fix: Review AI-generated code the same way you’d review a junior engineer’s pull request. Understand what it wrote and why. If you don’t understand a piece of code, don’t accept it. Ask the AI to explain it. Refactor as you go, not at the end.
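As one illustration of what to look for in review, consider the redundant state variable mentioned above. The cart types and functions below are hypothetical and not taken from any real project; the point is only that a stored total can drift away from the items it summarizes, while a derived total cannot.

```typescript
interface CartItem {
  id: string;
  priceCents: number;
  quantity: number;
}

// A pattern that can slip in unnoticed: the total is stored next to the items.
interface CartWithStoredTotal {
  items: CartItem[];
  totalCents: number;
}

// This update adds an item but forgets totalCents, so the two fields disagree.
function addItemForgettingTotal(
  cart: CartWithStoredTotal,
  item: CartItem
): CartWithStoredTotal {
  return { items: cart.items.concat([item]), totalCents: cart.totalCents };
}

// Safer pattern: keep one source of truth and derive everything else from it.
function deriveTotalCents(items: CartItem[]): number {
  return items.reduce((sum, item) => sum + item.priceCents * item.quantity, 0);
}
```

Each function looks fine in isolation, which is exactly why this kind of drift only surfaces when the pieces are wired together.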

Problem 3: Vague Prompts That Worked Early Don’t Work Late

When your project has three files, you can say “add a login page” and the AI figures it out. There’s only one reasonable interpretation. When your project has forty files, “add a login page” has dozens of interpretations. Which auth pattern? Which state management approach? How does it integrate with your existing navigation? Where does session data live?

The AI makes its best guess. If that guess doesn’t match your mental model of how the app works — and you haven’t communicated that mental model — the result feels like the AI is working against you.

The fix: Your prompts need to scale with your project’s complexity. Early on, high-level instructions are fine. As the project grows, your instructions need to be more specific: reference specific files, describe the expected data flow, name the functions that should be called. Think of it like delegating to a teammate — the bigger the codebase, the more context they need.
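One way to keep yourself honest about this is to treat a late-stage prompt as a structured brief instead of a single sentence. The sketch below is a hypothetical helper, not part of any Claude tooling: `TaskBrief` and `buildPrompt` are names I made up for illustration, and the section titles are just one reasonable layout.

```typescript
// Everything the AI needs to know for one task (hypothetical shape).
interface TaskBrief {
  goal: string;
  files: string[];
  dataFlow: string[];
  functionsToUse: string[];
}

// Render one titled bullet section, or nothing if the list is empty.
function renderSection(title: string, lines: string[]): string {
  if (lines.length === 0) {
    return "";
  }
  const bullets = lines.map((line) => `- ${line}`).join("\n");
  return `${title}:\n${bullets}\n\n`;
}

// Assemble the brief into a single prompt string.
function buildPrompt(brief: TaskBrief): string {
  const prompt =
    `Goal: ${brief.goal}\n\n` +
    renderSection("Relevant files", brief.files) +
    renderSection("Expected data flow", brief.dataFlow) +
    renderSection("Functions to call", brief.functionsToUse);
  return prompt.trim();
}

// Example brief; the data-flow step is invented for illustration.
const loginPrompt = buildPrompt({
  goal: "Add a login page",
  files: ["src/components/LoginForm.tsx", "src/contexts/AuthContext.tsx"],
  dataFlow: ["LoginForm submits credentials, AuthContext stores the session token"],
  functionsToUse: [],
});
```

If you cannot fill in the files and the data flow yourself, that is usually a sign the AI will have to guess them too.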

Problem 4: You Built a Frame, Not a System

This is the hardest one to hear. Some projects that “break at 95%” were never really 95% complete. They were a collection of screens and components that looked finished but weren’t actually connected into a working system. The routing existed but data wasn’t flowing through it correctly. The UI was polished but the state management was held together with duct tape.

AI tools are excellent at generating individual components. They’re less reliable at maintaining architectural coherence across an entire application — especially if you never established that architecture clearly in the first place. The AI was building what you asked for: individual pieces. The system-level integration was always going to be the hard part, with or without AI.

The fix: Start with architecture, not features. Before you write a single component, define your data models, your state management approach, your routing strategy, and your API contracts. Give this to the AI as foundational context. When every component is built against the same architectural plan, integration at 95% is straightforward, not catastrophic.
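A minimal sketch of what “architecture first” might look like in TypeScript, before any component exists. Every name here (`Session`, `AuthApi`, `AuthState`, `authReducer`, `routeFor`) is a hypothetical example, and a real project would have more models than this. What matters is that the data model, the API contract, the state approach, and the routing rule are written down in one place the AI can be pointed at.

```typescript
// Data model: what a session is, everywhere in the app.
interface Session {
  token: string;
  userId: string;
  expiresAt: number; // Unix timestamp in milliseconds
}

// API contract: what any auth backend must provide.
interface AuthApi {
  login(email: string, password: string): Promise<Session>;
}

// State management: the single place session data lives.
interface AuthState {
  session: Session | null;
}

type AuthAction =
  | { type: "loggedIn"; session: Session }
  | { type: "loggedOut" };

// All session changes go through this one function.
function authReducer(state: AuthState, action: AuthAction): AuthState {
  switch (action.type) {
    case "loggedIn":
      return { session: action.session };
    case "loggedOut":
      return { session: null };
  }
}

// Routing strategy: which screen a given auth state should land on.
type Route = "/login" | "/dashboard";

function routeFor(state: AuthState): Route {
  return state.session === null ? "/login" : "/dashboard";
}
```

With contracts like these in the AI’s context, “add a login page” has far fewer plausible interpretations.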

Practical Tips From Someone Who Ships With AI Daily

I use Claude every day to engineer production software. Not toy projects — real SaaS applications with paying users. Here’s what I’ve learned about keeping AI effective through the entire lifecycle of a project:

- Maintain a project knowledge file. I keep a CLAUDE.md file in every project root that documents the architecture, key directories, data flow, conventions, and anything else the AI needs to make good decisions. This is non-negotiable for any project beyond a weekend hack.

- Work in small, focused increments. Don’t ask the AI to build five features at once. One feature, one file at a time. Test it. Commit it. Then move on. This keeps context manageable and prevents the “everything breaks at once” scenario.

- Use version control religiously. Commit after every working change. If the AI does make a bad change, you can revert to a known-good state in seconds. If you’re not using git, you’re flying without a parachute.

- Read what it writes. I cannot stress this enough. If you’re accepting AI-generated code without reading it, you’re not engineering software — you’re gambling. Every line the AI generates should make sense to you before it goes into your codebase.

- Provide context, not just instructions. Instead of “fix the bug,” try “the login form in src/components/LoginForm.tsx is submitting but the session token from the auth API response isn’t being stored in the context provider at src/contexts/AuthContext.tsx.” More context means better results, every time.

- Don’t start from scratch when things go wrong. When the AI makes a bad change, your instinct might be to start a new conversation and try again. Resist that. Instead, explain what went wrong, why it went wrong, and what the correct approach is. The model isn’t retrained by your feedback, but it does take everything in the current conversation into account, so your correction shapes what it writes next. Starting fresh throws away all that context.

- Establish patterns early. In the first 10% of the project, be extremely deliberate about the patterns you use. How you handle API calls, state management, error handling, and component structure in the early code becomes the template the AI follows for everything else.
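To illustrate the last tip, here is one example of a pattern worth settling in the first 10%: how errors are reported. This is a sketch, not a recommendation of one specific style. `Result`, `attempt`, and `parseToken` are hypothetical names, and the example is kept synchronous for brevity (a version for real API calls would be async).

```typescript
// One agreed-upon shape for "this worked" versus "this failed".
type Result<T> = { ok: true; value: T } | { ok: false; error: string };

// Run a piece of work and convert any thrown error into a Result.
function attempt<T>(work: () => T): Result<T> {
  try {
    return { ok: true, value: work() };
  } catch (err) {
    const message = err instanceof Error ? err.message : String(err);
    return { ok: false, error: message };
  }
}

// Example use: pull a session token out of a JSON response body.
function parseToken(body: string): Result<string> {
  return attempt(() => {
    const parsed = JSON.parse(body) as { token?: unknown };
    if (typeof parsed.token !== "string") {
      throw new Error("response has no token");
    }
    return parsed.token;
  });
}
```

Once two or three early functions follow the same shape, you can tell the AI to handle errors the way `parseToken` does, and review becomes a matter of spotting deviations.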

The Real Issue: AI Tools Require Engineering Skill

Here’s the uncomfortable truth underlying all of these “Claude sabotaged my project” complaints: AI coding assistants are force multipliers for engineering skill. If you have strong architectural thinking, good debugging instincts, and a clear vision for your system, AI makes you dramatically faster and more productive. If you don’t have those skills yet, AI can help you build impressive-looking things that fall apart under pressure.

That’s not a criticism — everyone starts somewhere. But it’s important to be honest about what these tools are. They’re not autopilot. They’re more like a very fast, very knowledgeable junior engineer who will do exactly what you tell them to, even if what you told them to do is wrong. The senior engineer’s job — your job — is to direct the work, review the output, and maintain the architectural vision.

Claude isn’t sabotaging your project. It’s showing you where your engineering process needs to level up. And that’s actually valuable feedback, if you’re willing to hear it.

If you’re building something with AI tools and hitting walls like this, reach out. This is exactly the kind of problem that benefits from having a senior engineer in your corner — someone who can look at your architecture, identify what’s actually breaking, and help you build systems that hold together from 0% to production.